statement

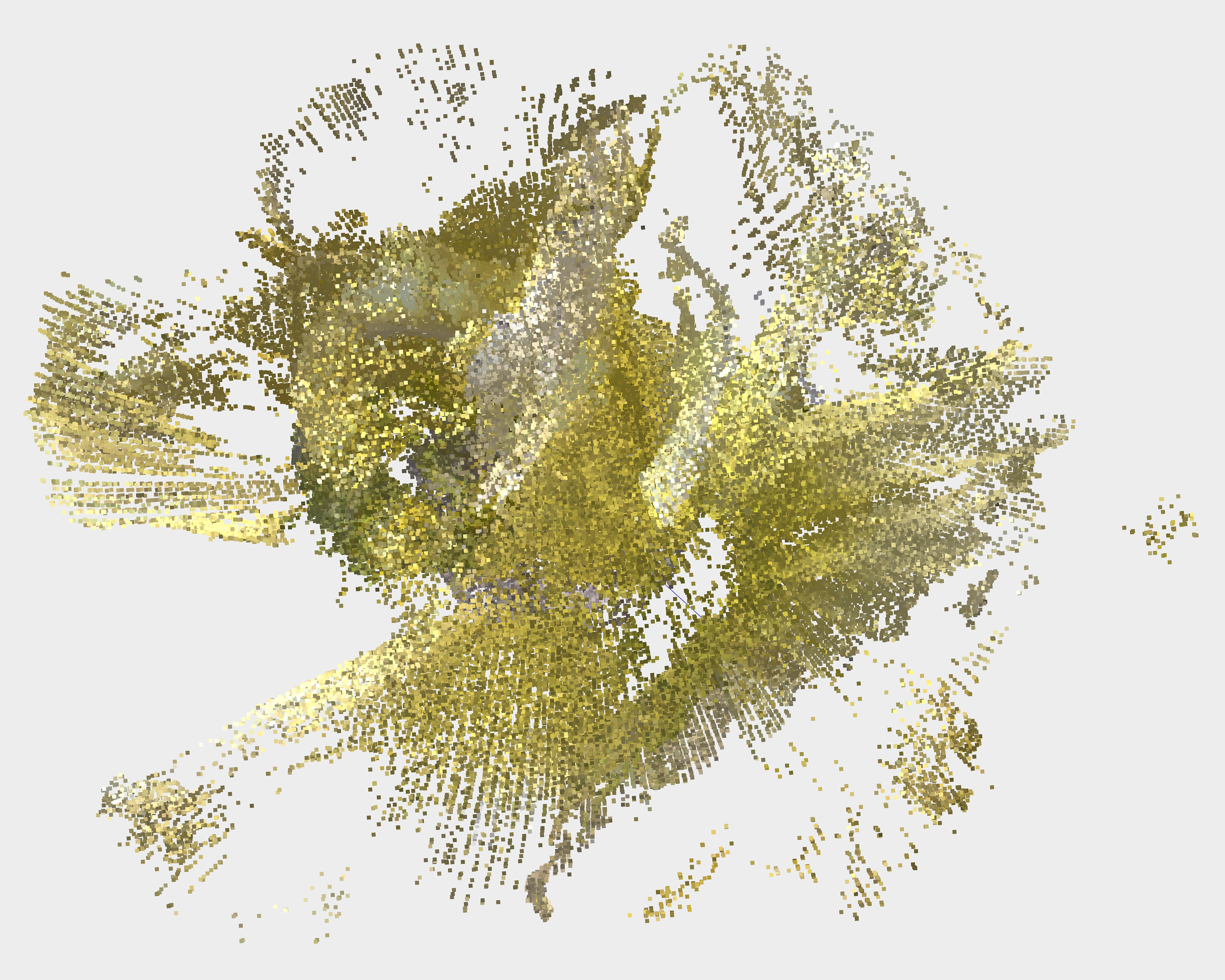

How do technologies such as 3D-scanners, modeling software, and artificially intelligent nature apps depict the ecological world? Mostly in varying degrees of abstraction with poetic outcomes. This is nature through the eyes of a machine, an emergent observer learning to name what it sees.

Emerging technologies are currently capable of interpreting only certain aspects of the physical world. Fei-Fei Li, the Director of Stanford University’s Artificial Intelligence Lab, describes these limits in a New York Times op-ed titled “How to Make A.I. That’s Good for People”:

“… in my lab, an image-captioning algorithm once fairly summarized a photo as ‘a man riding a horse’ but failed to note the fact that both were bronze sculptures. Other times, the difference is more profound, as when the same algorithm described an image of zebras grazing on a savanna beneath a rainbow. While the summary was technically correct, it was entirely devoid of aesthetic awareness, failing to detect any of the vibrancy or depth a human would naturally appreciate.”

These impediments become even more pronounced when technologies observe three-dimensional space (instead of the two-dimensional photographs used in Dr. Li’s lab).

The images to the left are point clouds and meshed STL files of 3D-scanned spring flowers. While beautiful in color and form, they lack the complexity needed to recognize the flowers as individual species. These images represent a generalized nature, the essence of spring.

The artworks on this site focus on technology’s struggle to interpret the natural world. Designed by humans for humans, these technologies may lead to a general intelligence capable of an autonomous existence. How will this intelligence encounter the interconnected web of life that is Earth?

In a world that is increasingly observed, analyzed, and constructed by computers, it is important to understand the limits of our technology. Natural history, the study of plants and animals, is a pursuit concerned with observation. Because of their limits, the technologies of today would make for terrible naturalists. Could we design a more livable future if we redirected the gaze of these technologies and programmed them to perceive the intricacy of life, biodiversity, and endemic ecosystems?
